Red Hat Enterprise Linux 8

Developing C and C++ applications in RHEL 8

Setting up a developer workstation, and developing and debugging C and C++ applications in Red Hat Enterprise Linux 8

Last Updated: 2024-07-08

Legal Notice

Copyright © 2024 Red Hat, Inc.

The text of and illustrations in this document are licensed by Red Hat under a Creative Commons Attribution–Share Alike 3.0 Unported license ("CC-BY-SA"). An explanation of CC-BY-SA is available at http://creativecommons.org/licenses/by-sa/3.0/. In accordance with CC-BY-SA, if you distribute this document or an adaptation of it, you must provide the URL for the original version.

Red Hat, as the licensor of this document, waives the right to enforce, and agrees not to assert,

Section 4d of CC-BY-SA to the fullest extent permitted by applicable law.

Red Hat, Red Hat Enterprise Linux, the Shadowman logo, the Red Hat logo, JBoss, OpenShift,

Fedora, the Infinity logo, and RHCE are trademarks of Red Hat, Inc., registered in the United States

and other countries.

Linux ® is the registered trademark of Linus Torvalds in the United States and other countries.

Java ® is a registered trademark of Oracle and/or its affiliates.

XFS ® is a trademark of Silicon Graphics International Corp. or its subsidiaries in the United States

and/or other countries.

MySQL ® is a registered trademark of MySQL AB in the United States, the European Union and

other countries.

Node.js ® is an official trademark of Joyent. Red Hat is not formally related to or endorsed by the

official Joyent Node.js open source or commercial project.

The OpenStack ® Word Mark and OpenStack logo are either registered trademarks/service marks

or trademarks/service marks of the OpenStack Foundation, in the United States and other

countries and are used with the OpenStack Foundation's permission. We are not affiliated with,

endorsed or sponsored by the OpenStack Foundation, or the OpenStack community.

All other trademarks are the property of their respective owners.

Abstract

Use the different features and utilities available in Red Hat Enterprise Linux 8 to develop and debug

C and C++ applications.

Table of Contents

PROVIDING FEEDBACK ON RED HAT DOCUMENTATION

CHAPTER 1. SETTING UP A DEVELOPMENT WORKSTATION

1.1. PREREQUISITES

1.2. ENABLING DEBUG AND SOURCE REPOSITORIES

1.3. SETTING UP TO MANAGE APPLICATION VERSIONS

1.4. SETTING UP TO DEVELOP APPLICATIONS USING C AND C++

1.5. SETTING UP TO DEBUG APPLICATIONS

1.6. SETTING UP TO MEASURE PERFORMANCE OF APPLICATIONS

CHAPTER 2. CREATING C OR C++ APPLICATIONS

2.1. BUILDING CODE WITH GCC

2.1.1. Relationship between code forms

2.1.2. Compiling source files to object code

2.1.3. Enabling debugging of C and C++ applications with GCC

2.1.4. Code optimization with GCC

2.1.5. Options for hardening code with GCC

2.1.6. Linking code to create executable files

2.1.7. Example: Building a C program with GCC (compiling and linking in one step)

2.1.8. Example: Building a C program with GCC (compiling and linking in two steps)

2.1.9. Example: Building a C++ program with GCC (compiling and linking in one step)

2.1.10. Example: Building a C++ program with GCC (compiling and linking in two steps)

2.2. USING LIBRARIES WITH GCC

2.2.1. Library naming conventions

2.2.2. Static and dynamic linking

2.2.3. Using a library with GCC

2.2.4. Using a static library with GCC

2.2.5. Using a dynamic library with GCC

2.2.6. Using both static and dynamic libraries with GCC

2.3. CREATING LIBRARIES WITH GCC

2.3.1. Library naming conventions

2.3.2. The soname mechanism

2.3.3. Creating dynamic libraries with GCC

2.3.4. Creating static libraries with GCC and ar

2.4. MANAGING MORE CODE WITH MAKE

2.4.1. GNU make and Makefile overview

2.4.2. Example: Building a C program using a Makefile

2.4.3. Documentation resources for make

2.5. CHANGES IN TOOLCHAIN SINCE RHEL 7

2.5.1. Changes in GCC in RHEL 8

2.5.2. Security enhancements in GCC in RHEL 8

2.5.3. Compatibility-breaking changes in GCC in RHEL 8

C++ ABI change in std::string and std::list

GCC no longer builds Ada, Go, and Objective C/C++ code

CHAPTER 3. DEBUGGING APPLICATIONS

3.1. ENABLING DEBUGGING WITH DEBUGGING INFORMATION

3.1.1. Debugging information

3.1.2. Enabling debugging of C and C++ applications with GCC

3.1.3. Debuginfo and debugsource packages

3.1.4. Getting debuginfo packages for an application or library using GDB

3.1.5. Getting debuginfo packages for an application or library manually

3.2. INSPECTING APPLICATION INTERNAL STATE WITH GDB

3.2.1. GNU debugger (GDB)

3.2.2. Attaching GDB to a process

3.2.3. Stepping through program code with GDB

3.2.4. Showing program internal values with GDB

3.2.5. Using GDB breakpoints to stop execution at defined code locations

3.2.6. Using GDB watchpoints to stop execution on data access and changes

3.2.7. Debugging forking or threaded programs with GDB

3.3. RECORDING APPLICATION INTERACTIONS

3.3.1. Tools useful for recording application interactions

3.3.2. Monitoring an application’s system calls with strace

3.3.3. Monitoring application’s library function calls with ltrace

3.3.4. Monitoring application’s system calls with SystemTap

3.3.5. Using GDB to intercept application system calls

3.3.6. Using GDB to intercept handling of signals by applications

3.4. DEBUGGING A CRASHED APPLICATION

3.4.1. Core dumps: what they are and how to use them

3.4.2. Recording application crashes with core dumps

3.4.3. Inspecting application crash states with core dumps

3.4.4. Creating and accessing a core dump with coredumpctl

3.4.5. Dumping process memory with gcore

3.4.6. Dumping protected process memory with GDB

3.5. COMPATIBILITY-BREAKING CHANGES IN GDB

GDBserver now starts inferiors with shell

gcj support removed

New syntax for symbol dumping maintenance commands

Thread numbers are no longer global

Memory for value contents can be limited

Sun version of stabs format no longer supported

Sysroot handling changes

HISTSIZE no longer controls GDB command history size

Completion limiting added

HP-UX XDB compatibility mode removed

Handling signals for threads

Breakpoint modes always-inserted off and auto merged

remotebaud commands no longer supported

3.6. DEBUGGING APPLICATIONS IN CONTAINERS

CHAPTER 4. ADDITIONAL TOOLSETS FOR DEVELOPMENT

4.1. USING GCC TOOLSET

4.1.1. What is GCC Toolset

4.1.2. Installing GCC Toolset

4.1.3. Installing individual packages from GCC Toolset

4.1.4. Uninstalling GCC Toolset

4.1.5. Running a tool from GCC Toolset

4.1.6. Running a shell session with GCC Toolset

4.1.7. Additional resources

4.2. GCC TOOLSET 9

4.2.1. Tools and versions provided by GCC Toolset 9

4.2.2. C++ compatibility in GCC Toolset 9

4.2.3. Specifics of GCC in GCC Toolset 9

4.2.4. Specifics of binutils in GCC Toolset 9

4.3. GCC TOOLSET 10

4.3.1. Tools and versions provided by GCC Toolset 10

4.3.2. C++ compatibility in GCC Toolset 10

4.3.3. Specifics of GCC in GCC Toolset 10

4.3.4. Specifics of binutils in GCC Toolset 10

4.4. GCC TOOLSET 11

4.4.1. Tools and versions provided by GCC Toolset 11

4.4.2. C++ compatibility in GCC Toolset 11

4.4.3. Specifics of GCC in GCC Toolset 11

4.4.4. Specifics of binutils in GCC Toolset 11

4.5. GCC TOOLSET 12

4.5.1. Tools and versions provided by GCC Toolset 12

4.5.2. C++ compatibility in GCC Toolset 12

4.5.3. Specifics of GCC in GCC Toolset 12

4.5.4. Specifics of binutils in GCC Toolset 12

4.5.5. Specifics of annobin in GCC Toolset 12

4.6. GCC TOOLSET 13

4.6.1. Tools and versions provided by GCC Toolset 13

4.6.2. C++ compatibility in GCC Toolset 13

4.6.3. Specifics of GCC in GCC Toolset 13

4.6.4. Specifics of binutils in GCC Toolset 13

4.6.5. Specifics of annobin in GCC Toolset 13

4.7. USING THE GCC TOOLSET CONTAINER IMAGE

4.7.1. GCC Toolset container image contents

4.7.2. Accessing and running the GCC Toolset container image

4.7.3. Example: Using the GCC Toolset 13 Toolchain container image

4.8. COMPILER TOOLSETS

4.9. THE ANNOBIN PROJECT

4.9.1. Using the annobin plugin

4.9.1.1. Enabling the annobin plugin

4.9.1.2. Passing options to the annobin plugin

4.9.2. Using the annocheck program

4.9.2.1. Using annocheck to examine files

4.9.2.2. Using annocheck to examine directories

4.9.2.3. Using annocheck to examine RPM packages

4.9.2.4. Using annocheck extra tools

4.9.2.4.1. Enabling the built-by tool

4.9.2.4.2. Enabling the notes tool

4.9.2.4.3. Enabling the section-size tool

4.9.2.4.4. Hardening checker basics

4.9.2.4.4.1. Hardening checker options

4.9.2.4.4.2. Disabling the hardening checker

4.9.3. Removing redundant annobin notes

4.9.4. Specifics of annobin in GCC Toolset 12

CHAPTER 5. SUPPLEMENTARY TOPICS

5.1. COMPATIBILITY-BREAKING CHANGES IN COMPILERS AND DEVELOPMENT TOOLS

librtkaio removed

Sun RPC and NIS interfaces removed from glibc

The nosegneg libraries for 32-bit Xen have been removed

make new operator != causes a different interpretation of certain existing makefile syntax

Valgrind library for MPI debugging support removed

Development headers and static libraries removed from valgrind-devel

5.2. OPTIONS FOR RUNNING A RHEL 6 OR 7 APPLICATION ON RHEL 8

PROVIDING FEEDBACK ON RED HAT DOCUMENTATION

We appreciate your feedback on our documentation. Let us know how we can improve it.

Submitting feedback through Jira (account required)

1. Log in to the Jira website.

2. Click Create in the top navigation bar.

3. Enter a descriptive title in the Summary field.

4. Enter your suggestion for improvement in the Description field. Include links to the relevant

parts of the documentation.

5. Click Create at the bottom of the dialogue.

CHAPTER 1. SETTING UP A DEVELOPMENT WORKSTATION

Red Hat Enterprise Linux 8 supports development of custom applications. Before developers can build them, the system must be set up with the required tools and utilities. This chapter lists the most common use cases for development and the items to install.

1.1. PREREQUISITES

The system must be installed, including a graphical environment, and subscribed.

1.2. ENABLING DEBUG AND SOURCE REPOSITORIES

A standard installation of Red Hat Enterprise Linux does not enable the debug and source repositories.

These repositories contain information needed to debug the system components and measure their

performance.

Procedure

Enable the source and debug information package channels:

# subscription-manager repos --enable rhel-8-for-$(uname -i)-baseos-debug-rpms

# subscription-manager repos --enable rhel-8-for-$(uname -i)-baseos-source-rpms

# subscription-manager repos --enable rhel-8-for-$(uname -i)-appstream-debug-rpms

# subscription-manager repos --enable rhel-8-for-$(uname -i)-appstream-source-rpms

The $(uname -i) part is automatically replaced with the matching value for the architecture of your system:

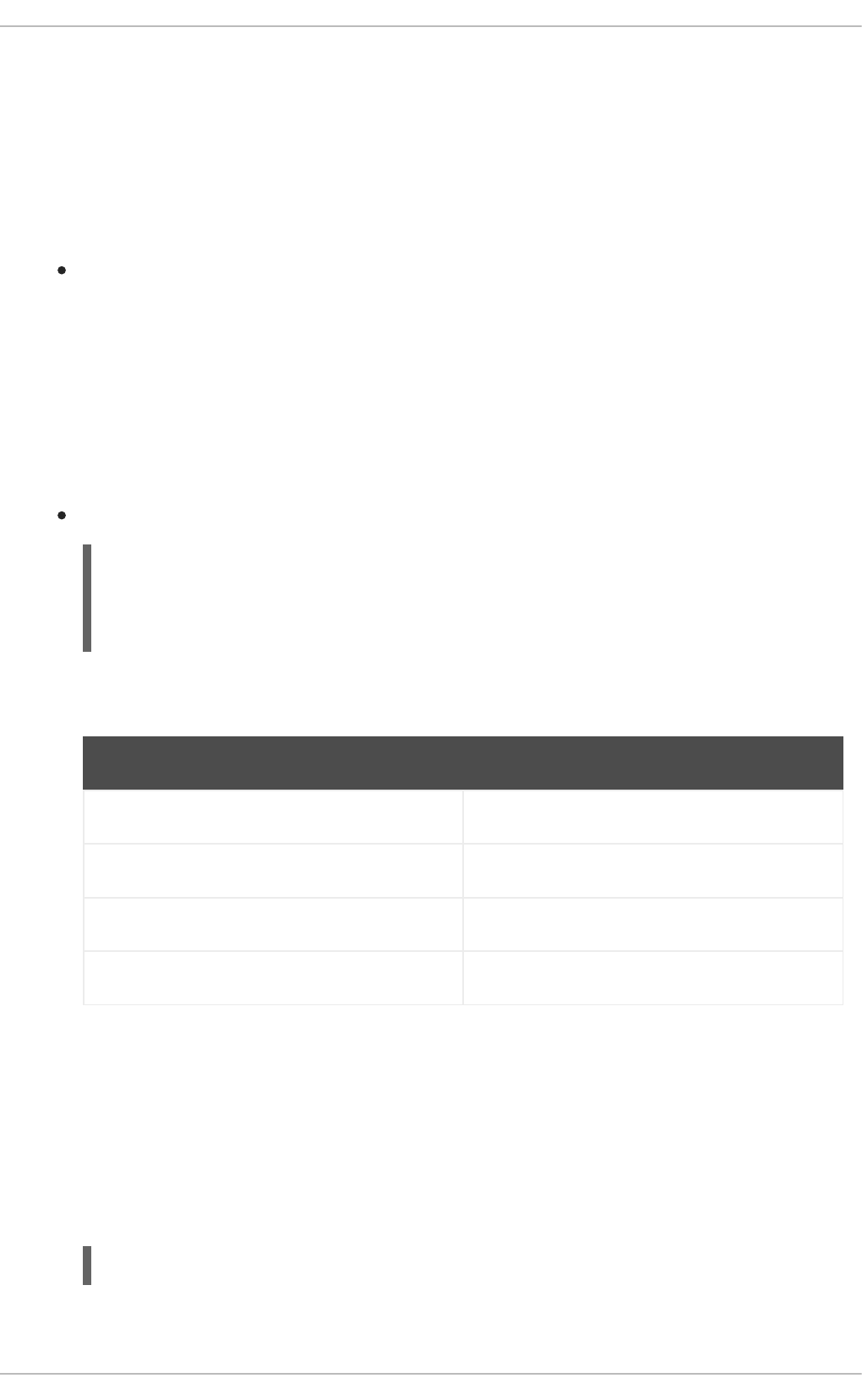

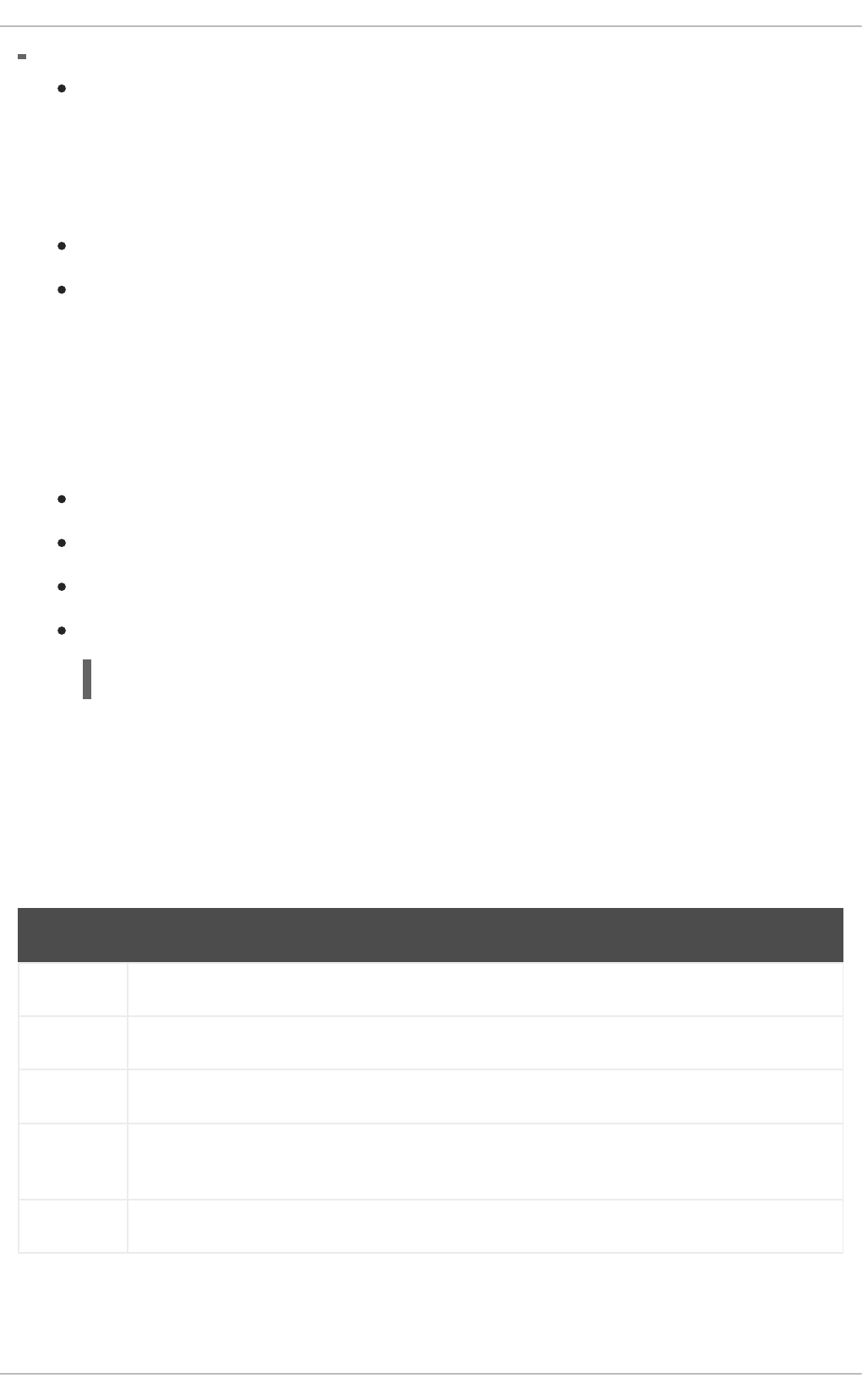

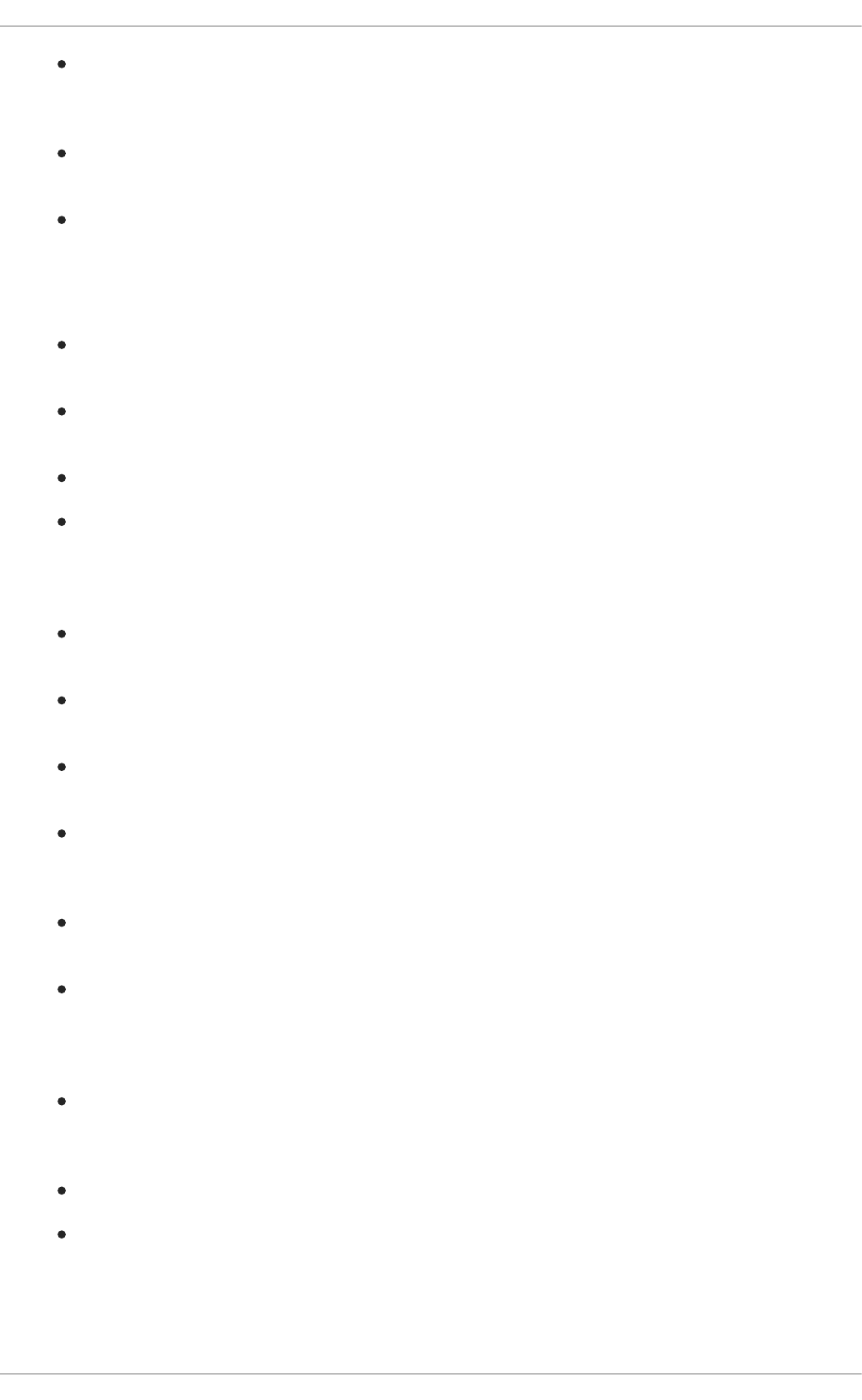

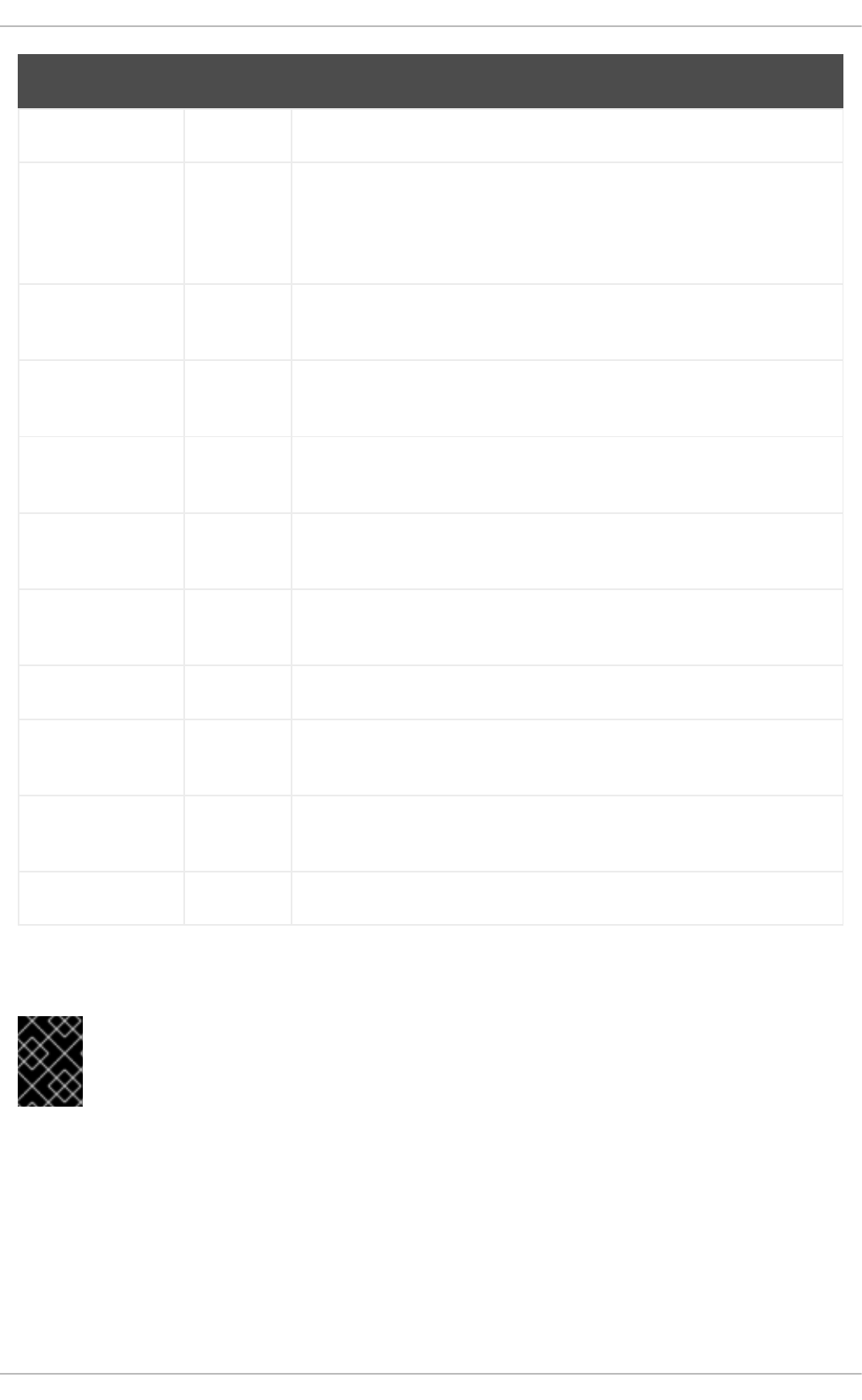

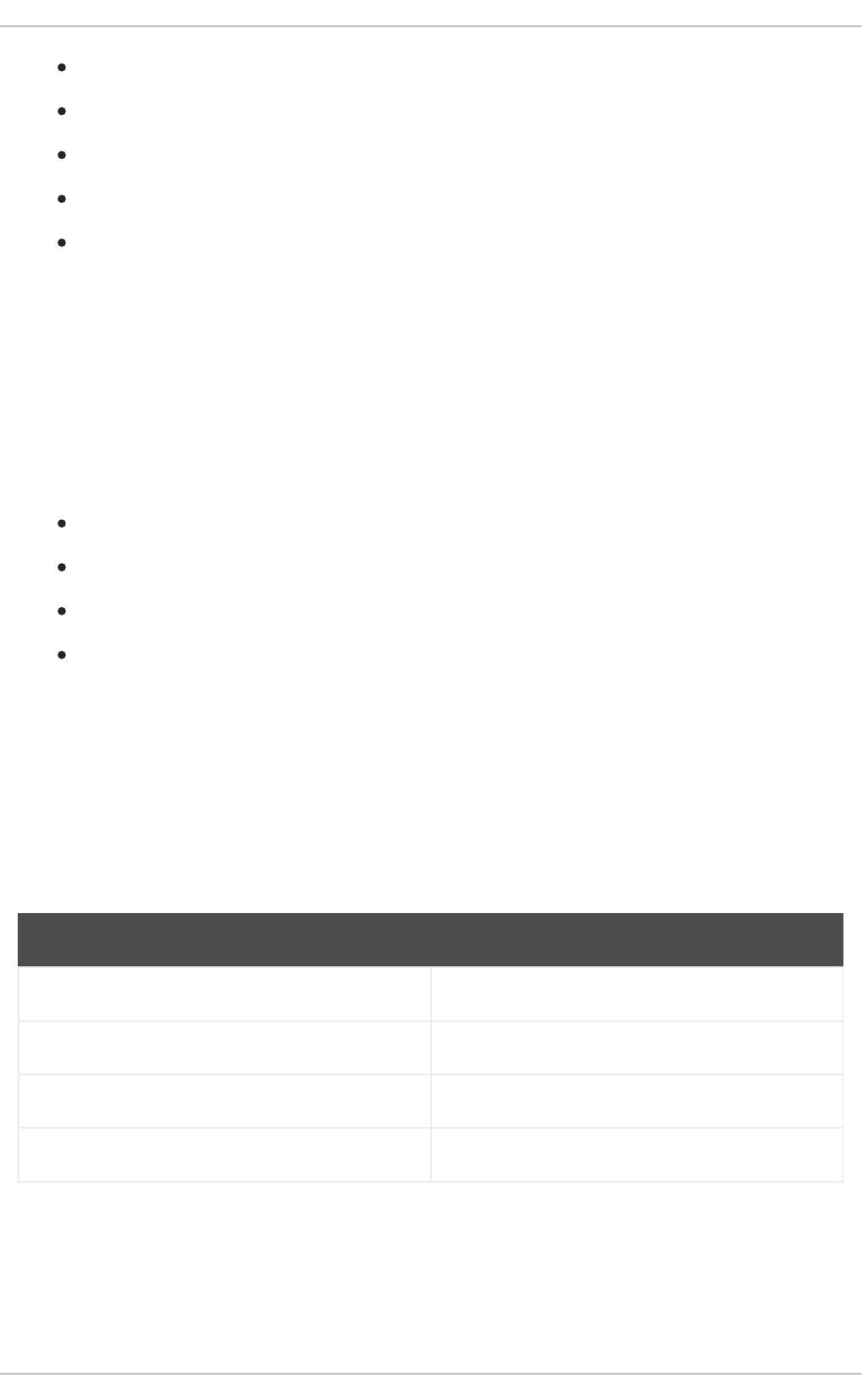

| Architecture name | Value |
|---|---|
| 64-bit Intel and AMD | x86_64 |
| 64-bit ARM | aarch64 |
| IBM POWER | ppc64le |
| 64-bit IBM Z | s390x |
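To preview which repository IDs the commands above would enable, the naming pattern can be expanded in a short shell loop. This is a minimal sketch that only prints the IDs and does not call subscription-manager. The function name list_repo_ids is hypothetical, and the sketch assumes uname -m as a stand-in for uname -i, because some non-RHEL systems report "unknown" for uname -i.

```shell
# Sketch: print the debug and source repository IDs for this machine.
# Assumption: uname -m stands in for uname -i; on RHEL both report the
# architecture values listed in the table (x86_64, aarch64, ppc64le, s390x).
arch="$(uname -m)"

# list_repo_ids is a hypothetical helper name used only in this sketch.
list_repo_ids() {
    for channel in baseos appstream; do
        for kind in debug source; do
            echo "rhel-8-for-${arch}-${channel}-${kind}-rpms"
        done
    done
}

list_repo_ids
```

Each printed ID can then be passed to subscription-manager repos --enable as shown in the procedure.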

1.3. SETTING UP TO MANAGE APPLICATION VERSIONS

Effective version control is essential to all multi-developer projects. Red Hat Enterprise Linux is shipped

with Git, a distributed version control system.

Procedure

1. Install the git package:

# yum install git

2. Optional: Set the full name and email address associated with your Git commits:

$ git config --global user.name "Full Name"

$ git config --global user.email "email@example.com"

Replace Full Name and email@example.com with your actual name and email address.

3. Optional: To change the default text editor started by Git, set the value of the core.editor configuration option:

$ git config --global core.editor command

Replace command with the command to be used to start the selected text editor.

Additional resources

Linux manual pages for Git and tutorials:

$ man git

$ man gittutorial

$ man gittutorial-2

Note that many Git commands have their own manual pages. For example, see git-commit(1).

Git User’s Manual: HTML documentation for Git is located at /usr/share/doc/git/user-manual.html.

Pro Git: The online version of the Pro Git book provides a detailed description of Git, its concepts, and its usage.

Reference: Online version of the Linux manual pages for Git

1.4. SETTING UP TO DEVELOP APPLICATIONS USING C AND C++

Red Hat Enterprise Linux includes tools for creating C and C++ applications.

Prerequisites

The debug and source repositories must be enabled.

Procedure

1. Install the Development Tools package group including GNU Compiler Collection (GCC), GNU

Debugger (GDB), and other development tools:

# yum group install "Development Tools"

2. Install the LLVM-based toolchain including the clang compiler and lldb debugger:

# yum install llvm-toolset

3. Optional: For Fortran dependencies, install the GNU Fortran compiler:

# yum install gcc-gfortran

1.5. SETTING UP TO DEBUG APPLICATIONS

Red Hat Enterprise Linux offers multiple debugging and instrumentation tools to analyze and

troubleshoot internal application behavior.

Prerequisites

The debug and source repositories must be enabled.

Procedure

1. Install the tools useful for debugging:

# yum install gdb valgrind systemtap ltrace strace

2. Install the yum-utils package in order to use the debuginfo-install tool:

# yum install yum-utils

3. Run a SystemTap helper script for setting up the environment.

# stap-prep

Note that stap-prep installs packages relevant to the currently running kernel, which might not be the same as the actually installed kernel(s). To ensure stap-prep installs the correct kernel-debuginfo and kernel-headers packages, double-check the current kernel version by using the uname -r command and reboot your system if necessary.

4. Make sure SELinux policies allow the relevant applications to run not only normally, but also while they are being debugged. For more information, see Using SELinux.

Additional resources

Section 3.1, “Enabling Debugging with Debugging Information”

1.6. SETTING UP TO MEASURE PERFORMANCE OF APPLICATIONS

Red Hat Enterprise Linux includes several applications that can help a developer identify the causes of

application performance loss.

Prerequisites

The debug and source repositories must be enabled.

Procedure

1. Install the tools for performance measurement:

# yum install perf papi pcp-zeroconf valgrind strace sysstat systemtap

2. Run a SystemTap helper script for setting up the environment.

# stap-prep

Note that stap-prep installs packages relevant to the currently running kernel, which might not be the same as the actually installed kernel(s). To ensure stap-prep installs the correct kernel-debuginfo and kernel-headers packages, double-check the current kernel version by using the uname -r command and reboot your system if necessary.

3. Enable and start the Performance Co-Pilot (PCP) collector service:

# systemctl enable pmcd && systemctl start pmcd

CHAPTER 2. CREATING C OR C++ APPLICATIONS

2.1. BUILDING CODE WITH GCC

Learn about situations where source code must be transformed into executable code.

2.1.1. Relationship between code forms

Prerequisites

Understanding the concepts of compiling and linking

Possible code forms

The C and C++ languages have three forms of code:

Source code written in the C or C++ language, present as plain text files.

The files typically use extensions such as .c, .cc, .cpp, .h, .hpp, .i, .inc. For a complete list of

supported extensions and their interpretation, see the gcc manual pages:

$ man gcc

Object code, created by compiling the source code with a compiler. This is an intermediate

form.

The object code files use the .o extension.

Executable code, created by linking object code with a linker.

Linux application executable files do not use any file name extension. Shared object (library)

executable files use the .so file name extension.

NOTE

Library archive files for static linking also exist. This is a variant of object code that uses

the .a file name extension. Static linking is not recommended. See Section 2.2.2, “Static

and dynamic linking”.

Handling of code forms in GCC

Producing executable code from source code is performed in two steps, which require different

applications or tools. GCC can be used as an intelligent driver for both compilers and linkers. This allows

you to use a single gcc command for any of the required actions (compiling and linking). GCC

automatically selects the actions and their sequence:

1. Source files are compiled to object files.

2. Object files and libraries are linked (including the previously compiled sources).

It is possible to run GCC so that it performs only compiling, only linking, or both compiling and linking in

a single step. This is determined by the types of inputs and requested type of output(s).

Because larger projects require a build system which usually runs GCC separately for each action, it is

better to always consider compilation and linking as two distinct actions, even if GCC can perform both

at once.

Additional resources

Section 2.1.2, “Compiling source files to object code”

Section 2.1.6, “Linking code to create executable files”

Section 2.1.7, “Example: Building a C program with GCC (compiling and linking in one step)”

Section 2.1.8, “Example: Building a C program with GCC (compiling and linking in two steps)”

2.1.2. Compiling source files to object code

To create object code files from source files and not an executable file immediately, GCC must be

instructed to create only object code files as its output. This action represents the basic operation of the

build process for larger projects.

Prerequisites

C or C++ source code file(s)

GCC installed on the system

Procedure

1. Change to the directory containing the source code file(s).

2. Run gcc with the -c option:

$ gcc -c source.c another_source.c

Object files are created, with their file names reflecting the original source code files: source.c

results in source.o.

NOTE

With C++ source code, replace the gcc command with g++ for convenient

handling of C++ Standard Library dependencies.

Additional resources

Section 2.1.5, “Options for hardening code with GCC”

Section 2.1.4, “Code optimization with GCC”

Section 2.1.7, “Example: Building a C program with GCC (compiling and linking in one step)”

2.1.3. Enabling debugging of C and C++ applications with GCC

Because debugging information is large, it is not included in executable files by default. To enable

debugging of your C and C++ applications with it, you must explicitly instruct the compiler to create it.

To enable creation of debugging information with GCC when compiling and linking code, use the -g

option:

$ gcc ... -g ...

Optimizations performed by the compiler and linker can result in executable code which is hard to relate to the original source code: variables may be optimized out, loops unrolled, operations merged into the surrounding ones, and so on. This affects debugging negatively. For an improved debugging experience, consider setting the optimization with the -Og option. However, changing the optimization level changes the executable code and may change the actual behavior, including making some bugs disappear.

To also include macro definitions in the debug information, use the -g3 option instead of -g.

The -fcompare-debug GCC option tests code compiled by GCC with debug information and

without debug information. The test passes if the resulting two binary files are identical. This

test ensures that executable code is not affected by any debugging options, which further

ensures that there are no hidden bugs in the debug code. Note that using the -fcompare-debug

option significantly increases compilation time. See the GCC manual page for details about this

option.

Additional resources

Section 3.1, “Enabling Debugging with Debugging Information”

Using the GNU Compiler Collection (GCC) — Options for Debugging Your Program

Debugging with GDB — Debugging Information in Separate Files

The GCC manual page:

$ man gcc

2.1.4. Code optimization with GCC

A single program can be transformed into more than one sequence of machine instructions. You can

achieve a more optimal result if you allocate more resources to analyzing the code during compilation.

With GCC, you can set the optimization level using the -Olevel option. This option accepts a set of

values in place of the level.

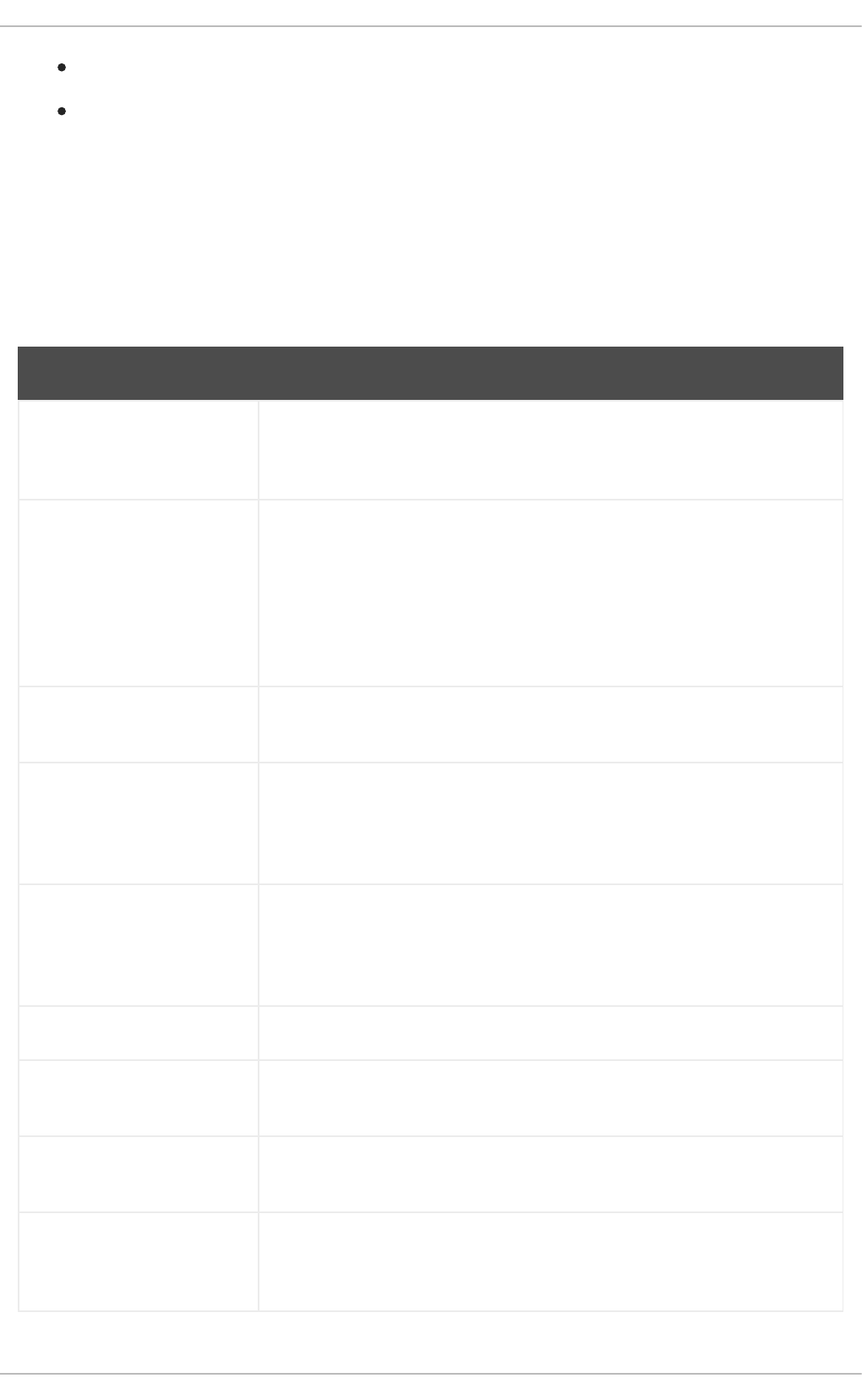

| Level | Description |
|---|---|
| 0 | Optimize for compilation speed - no code optimization (default). |
| 1, 2, 3 | Optimize to increase code execution speed (the larger the number, the greater the speed). |
| s | Optimize for file size. |
| fast | Same as a level 3 setting, plus fast disregards strict standards compliance to allow for additional optimizations. |
| g | Optimize for debugging experience. |

For release builds, use the optimization option -O2.

During development, the -Og option is useful for debugging the program or library in some situations.

Because some bugs manifest only with certain optimization levels, test the program or library with the

release optimization level.

GCC offers a large number of options to enable individual optimizations. For more information, see the

following Additional resources.

Additional resources

Using GNU Compiler Collection — Options That Control Optimization

Linux manual page for GCC:

$ man gcc

2.1.5. Options for hardening code with GCC

When the compiler transforms source code to object code, it can add various checks to prevent

commonly exploited situations and increase security. Choosing the right set of compiler options can

help produce more secure programs and libraries, without having to change the source code.

Release version options

The following list of options is the recommended minimum for developers targeting Red Hat

Enterprise Linux:

$ gcc ... -O2 -g -Wall -Wl,-z,now,-z,relro -fstack-protector-strong -fstack-clash-protection -D_FORTIFY_SOURCE=2 ...

For programs, add the -fPIE and -pie Position Independent Executable options.

For dynamically linked libraries, the mandatory -fPIC (Position Independent Code) option

indirectly increases security.

Development options

Use the following options to detect security flaws during development. Use these options in conjunction

with the options for the release version:

$ gcc ... -Walloc-zero -Walloca-larger-than -Wextra -Wformat-security -Wvla-larger-than ...

Additional resources

Defensive Coding Guide

Memory Error Detection Using GCC — Red Hat Developers Blog post

2.1.6. Linking code to create executable files

Linking is the final step when building a C or C++ application. Linking combines all object files and

libraries into an executable file.

Prerequisites

One or more object file(s)

GCC must be installed on the system

Procedure

1. Change to the directory containing the object code file(s).

2. Run gcc:

$ gcc ... objfile.o another_object.o ... -o executable-file

An executable file named executable-file is created from the supplied object files and libraries.

To link additional libraries, add the required options after the list of object files. For more information, see Section 2.2, “Using Libraries with GCC”.

NOTE

With C++ source code, replace the gcc command with g++ for convenient

handling of C++ Standard Library dependencies.

Additional resources

Section 2.1.7, “Example: Building a C program with GCC (compiling and linking in one step)”

Section 2.2.2, “Static and dynamic linking”

2.1.7. Example: Building a C program with GCC (compiling and linking in one step)

This example shows the exact steps to build a simple sample C program.

In this example, compiling and linking the code is done in one step.

Prerequisites

You must understand how to use GCC.

Procedure

1. Create a directory hello-c and change to it:

$ mkdir hello-c

$ cd hello-c

2. Create file hello.c with the following contents:

#include <stdio.h>

int main() {
    printf("Hello, World!\n");
    return 0;
}

3. Compile and link the code with GCC:

$ gcc hello.c -o helloworld

This compiles the code into a temporary object file and links the executable file helloworld from it. No hello.o file is left in the directory.

4. Run the resulting executable file:

$ ./helloworld

Hello, World!

Additional resources

Section 2.4.2, “Example: Building a C program using a Makefile”

2.1.8. Example: Building a C program with GCC (compiling and linking in two steps)

This example shows the exact steps to build a simple sample C program.

In this example, compiling and linking the code are two separate steps.

Prerequisites

You must understand how to use GCC.

Procedure

1. Create a directory hello-c and change to it:

$ mkdir hello-c

$ cd hello-c

2. Create file hello.c with the following contents:

#include <stdio.h>

int main() {
    printf("Hello, World!\n");
    return 0;
}

3. Compile the code with GCC:

$ gcc -c hello.c

The object file hello.o is created.

4. Link an executable file helloworld from the object file:

$ gcc hello.o -o helloworld

5. Run the resulting executable file:

$ ./helloworld

Hello, World!

Additional resources

Section 2.4.2, “Example: Building a C program using a Makefile”

2.1.9. Example: Building a C++ program with GCC (compiling and linking in one step)

This example shows the exact steps to build a sample minimal C++ program.

In this example, compiling and linking the code is done in one step.

Prerequisites

You must understand the difference between gcc and g++.

Procedure

1. Create a directory hello-cpp and change to it:

$ mkdir hello-cpp

$ cd hello-cpp

2. Create file hello.cpp with the following contents:

#include <iostream>

int main() {
    std::cout << "Hello, World!\n";
    return 0;
}

3. Compile and link the code with g++:

$ g++ hello.cpp -o helloworld

This compiles the code into a temporary object file and links the executable file helloworld from it. No hello.o file is left in the directory.

4. Run the resulting executable file:

$ ./helloworld

Hello, World!

2.1.10. Example: Building a C++ program with GCC (compiling and linking in two steps)

This example shows the exact steps to build a sample minimal C++ program.

In this example, compiling and linking the code are two separate steps.

Prerequisites

You must understand the difference between gcc and g++.

Procedure

1. Create a directory hello-cpp and change to it:

$ mkdir hello-cpp

$ cd hello-cpp

2. Create file hello.cpp with the following contents:

3. Compile the code with g++:

$ g++ -c hello.cpp

The object file hello.o is created.

4. Link an executable file helloworld from the object file:

$ g++ hello.o -o helloworld

5. Run the resulting executable file:

$ ./helloworld

Hello, World!

2.2. USING LIBRARIES WITH GCC

Learn about using libraries in code.

2.2.1. Library naming conventions

A special file name convention is used for libraries: a library known as foo is expected to exist as file

libfoo.so or libfoo.a. This convention is automatically understood by the linking input options of GCC,

but not by the output options:

When linking against the library, the library can be specified only by its name foo with the -l

option as -lfoo:

$ gcc ... -lfoo ...

When creating the library, the full file name libfoo.so or libfoo.a must be specified.


Additional resources

Section 2.3.2, “The soname mechanism”

2.2.2. Static and dynamic linking

Developers have a choice of using static or dynamic linking when building applications with fully compiled languages. It is important to understand the differences between static and dynamic linking, particularly in the context of using the C and C++ languages on Red Hat Enterprise Linux. To summarize, Red Hat discourages the use of static linking in applications for Red Hat Enterprise Linux.

Comparison of static and dynamic linking

Static linking makes libraries part of the resulting executable file. Dynamic linking keeps these libraries

as separate files.

Dynamic and static linking can be compared in a number of ways:

Resource use

Static linking results in larger executable files which contain more code. This additional code coming

from libraries cannot be shared across multiple programs on the system, increasing file system usage

and memory usage at run time. Multiple processes running the same statically linked program will still

share the code.

On the other hand, static applications need fewer run-time relocations, leading to reduced startup

time, and require less private resident set size (RSS) memory. Generated code for static linking can

be more efficient than for dynamic linking due to the overhead introduced by position-independent

code (PIC).

Security

Dynamically linked libraries which provide ABI compatibility can be updated without changing the

executable files depending on these libraries. This is especially important for libraries provided by

Red Hat as part of Red Hat Enterprise Linux, where Red Hat provides security updates. Static linking

against any such libraries is strongly discouraged.

Compatibility

Static linking appears to provide executable files independent of the versions of libraries provided by

the operating system. However, most libraries depend on other libraries. With static linking, this

dependency becomes inflexible and as a result, both forward and backward compatibility is lost.

Static linking is guaranteed to work only on the system where the executable file was built.

WARNING

Applications that statically link libraries from the GNU C Library (glibc) still require glibc to be present on the system as a dynamic library. Furthermore, the dynamic library variant of glibc available at the application’s run time must be bitwise identical to the version present while linking the application. As a result, static linking is guaranteed to work only on the system where the executable file was built.

Support coverage


Most static libraries provided by Red Hat are in the CodeReady Linux Builder channel and not

supported by Red Hat.

Functionality

Some libraries, notably the GNU C Library (glibc), offer reduced functionality when linked statically.

For example, when statically linked, glibc does not support threads and any form of calls to the

dlopen() function in the same program.

As a result of the listed disadvantages, static linking should be avoided at all costs, particularly for whole

applications and the glibc and libstdc++ libraries.

Cases for static linking

Static linking might be a reasonable choice in some cases, such as:

Using a library which is not enabled for dynamic linking.

Fully static linking can be required for running code in an empty chroot environment or

container. However, static linking using the glibc-static package is not supported by Red Hat.

Additional resources

Red Hat Enterprise Linux 8: Application Compatibility Guide

Description of the CodeReady Linux Builder repository in the Package manifest

2.2.3. Using a library with GCC

A library is a package of code which can be reused in your program. A C or C++ library consists of two

parts:

The library code

Header files

Compiling code that uses a library

The header files describe the interface of the library: the functions and variables available in the library.

Information from the header files is needed for compiling the code.

Typically, header files of a library will be placed in a different directory than your application’s code. To

tell GCC where the header files are, use the -I option:

$ gcc ... -Iinclude_path ...

Replace include_path with the actual path to the header file directory.

The -I option can be used multiple times to add multiple directories with header files. When looking for a

header file, these directories are searched in the order of their appearance in the -I options.

Linking code that uses a library

When linking the executable file, both the object code of your application and the binary code of the

library must be available. The code for static and dynamic libraries is present in different forms:


Static libraries are available as archive files. They contain a group of object files. The archive file

has a file name extension .a.

Dynamic libraries are available as shared objects. They are a form of an executable file. A shared

object has a file name extension .so.

To tell GCC where the archives or shared object files of a library are, use the -L option:

$ gcc ... -Llibrary_path -lfoo ...

Replace library_path with the actual path to the library directory.

The -L option can be used multiple times to add multiple directories. When looking for a library, these

directories are searched in the order of their -L options.

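The -L options are conventionally placed before the -l options, which keeps the command easy to read. With the GNU linker, all -L directories apply to all -l options regardless of their order on the command line. The position of each -l option relative to the object files is what matters: list a library after the object files and libraries that use its symbols.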

Compiling and linking code which uses a library in one step

When the situation allows the code to be compiled and linked in one gcc command, use the options for

both situations mentioned above at once.
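As an illustration of how the pieces relate, the following sketch shows a hypothetical library foo in a single listing. The names foo.h, foo.c, app.c, foo_max, and clamp_to_floor are assumptions made for this example and do not come from any real library. In practice, the three parts would live in separate files.

```c
/* ---- foo.h (hypothetical): the interface the application compiles against ---- */
#ifndef FOO_H
#define FOO_H

/* Returns the larger of two integers. */
int foo_max(int a, int b);

#endif /* FOO_H */

/* ---- foo.c (hypothetical): the code shipped as libfoo.a or libfoo.so ---- */
int foo_max(int a, int b)
{
    return a > b ? a : b;
}

/* ---- app.c (hypothetical): application code; it needs only the
 * declaration from foo.h to compile, and the library code to link ---- */
int clamp_to_floor(int value, int floor)
{
    return foo_max(value, floor);
}
```

With the parts split into files, compiling app.c needs the -I option to find foo.h, and the link step needs the -L and -lfoo options to find the library code.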

Additional resources

Using the GNU Compiler Collection (GCC) — Options for Directory Search

Using the GNU Compiler Collection (GCC) — Options for Linking

2.2.4. Using a static library with GCC

Static libraries are available as archives containing object files. After linking, they become part of the

resulting executable file.

NOTE

Red Hat discourages use of static linking for security reasons. See Section 2.2.2, “Static

and dynamic linking”. Use static linking only when necessary, especially against libraries

provided by Red Hat.

Prerequisites

GCC must be installed on your system.

You must understand static and dynamic linking.

You have a set of source or object files forming a valid program, requiring some static library

foo and no other libraries.

The foo library is available as a file libfoo.a, and no file libfoo.so is provided for dynamic linking.


NOTE

Most libraries which are part of Red Hat Enterprise Linux are supported for dynamic

linking only. The steps below only work for libraries which are not enabled for dynamic

linking. See Section 2.2.2, “Static and dynamic linking”.

Procedure

To link a program from source and object files, adding a statically linked library foo, which is to be found

as a file libfoo.a:

1. Change to the directory containing your code.

2. Compile the program source files with headers of the foo library:

$ gcc ... -Iheader_path -c ...

Replace header_path with a path to a directory containing the header files for the foo library.

3. Link the program with the foo library:

$ gcc ... -Llibrary_path -lfoo ...

Replace library_path with a path to a directory containing the file libfoo.a.

4. To run the program later, simply:

$ ./program

WARNING

The -static GCC option related to static linking forbids all dynamic linking. Instead,

use the -Wl,-Bstatic and -Wl,-Bdynamic options to control linker behavior more

precisely. See Section 2.2.6, “Using both static and dynamic libraries with GCC”.

2.2.5. Using a dynamic library with GCC

Dynamic libraries are available as standalone executable files, required at both linking time and run time.

They stay independent of your application’s executable file.

Prerequisites

GCC must be installed on the system.

A set of source or object files forming a valid program, requiring some dynamic library foo and

no other libraries.

The foo library must be available as a file libfoo.so.

Linking a program against a dynamic library


To link a program against a dynamic library foo:

$ gcc ... -Llibrary_path -lfoo ...

When a program is linked against a dynamic library, the resulting program must always load the library at run time. There are three options for locating the library:

Using an rpath value stored in the executable file itself

Using the LD_LIBRARY_PATH variable at run time

Placing the library into the default directories

Using an rpath value stored in the executable file

The rpath is a special value saved as a part of an executable file when it is being linked. Later, when the

program is loaded from its executable file, the runtime linker will use the rpath value to locate the library

files.

While linking with GCC, to store the path library_path as rpath:

$ gcc ... -Llibrary_path -lfoo -Wl,-rpath=library_path ...

The path library_path must point to a directory containing the file libfoo.so.

IMPORTANT

Do not add a space after the comma in the -Wl,-rpath= option.

To run the program later:

$ ./program

Using the LD_LIBRARY_PATH environment variable

If no rpath is found in the program’s executable file, the runtime linker will use the LD_LIBRARY_PATH

environment variable. The value of this variable must be changed for each program. This value should

represent the path where the shared library objects are located.

To run the program without rpath set, with libraries present in path library_path:

$ export LD_LIBRARY_PATH=library_path:$LD_LIBRARY_PATH

$ ./program

Leaving out the rpath value offers flexibility, but requires setting the LD_LIBRARY_PATH variable

every time the program is to run.

Placing the library into the default directories

The runtime linker configuration specifies a number of directories as a default location of dynamic library files. To use this default behavior, copy your library to the appropriate directory.

A full description of the dynamic linker behavior is out of scope of this document. For more information,

see the following resources:

Linux manual pages for the dynamic linker:


$ man ld.so

Contents of the /etc/ld.so.conf configuration file:

$ cat /etc/ld.so.conf

Report of the libraries recognized by the dynamic linker without additional configuration, which

includes the directories:

$ ldconfig -v

2.2.6. Using both static and dynamic libraries with GCC

Sometimes it is required to link some libraries statically and some dynamically. This situation brings some

challenges.

Prerequisites

Understanding static and dynamic linking

Introduction

gcc recognizes both dynamic and static libraries. When the -lfoo option is encountered, gcc will first

attempt to locate a shared object (a .so file) containing a dynamically linked version of the foo library,

and then look for the archive file (.a) containing a static version of the library. Thus, the following

situations can result from this search:

Only the shared object is found, and gcc links against it dynamically.

Only the archive is found, and gcc links against it statically.

Both the shared object and archive are found, and by default, gcc selects dynamic linking

against the shared object.

Neither shared object nor archive is found, and linking fails.

Because of these rules, the best way to select the static or dynamic version of a library for linking is

having only that version found by gcc. This can be controlled to some extent by using or leaving out

directories containing the library versions, when specifying the -Lpath options.

Additionally, because dynamic linking is the default, the only situation where linking must be explicitly

specified is when a library with both versions present should be linked statically. There are two possible

resolutions:

Specifying the static libraries by file path instead of the -l option

Using the -Wl option to pass options to the linker

Specifying the static libraries by file

Usually, gcc is instructed to link against the foo library with the -lfoo option. However, it is possible to

specify the full path to file libfoo.a containing the library instead:

$ gcc ... path/to/libfoo.a ...


From the file extension .a, gcc will understand that this is a library to link with the program. However,

specifying the full path to the library file is a less flexible method.

Using the -Wl option

The gcc option -Wl is a special option for passing options to the underlying linker. The syntax of this option differs from the other gcc options: the -Wl option is followed by a comma-separated list of linker options, while other gcc options require a space-separated list of options.

The ld linker used by gcc offers the options -Bstatic and -Bdynamic to specify whether libraries following this option should be linked statically or dynamically, respectively. After passing -Bstatic and a library to the linker, the default dynamic linking behavior must be restored manually with the -Bdynamic option for the following libraries to be linked dynamically.

To link a program with the library first linked statically (libfirst.a) and the library second linked dynamically (libsecond.so):

$ gcc ... -Wl,-Bstatic -lfirst -Wl,-Bdynamic -lsecond ...

NOTE

gcc can be configured to use linkers other than the default ld.

Additional resources

Using the GNU Compiler Collection (GCC) — 3.14 Options for Linking

Documentation for binutils 2.27 — 2.1 Command Line Options

2.3. CREATING LIBRARIES WITH GCC

Learn about the steps to creating libraries and the necessary concepts used by the Linux operating

system for libraries.

2.3.1. Library naming conventions

A special file name convention is used for libraries: a library known as foo is expected to exist as file

libfoo.so or libfoo.a. This convention is automatically understood by the linking input options of GCC,

but not by the output options:

When linking against the library, the library can be specified only by its name foo with the -l

option as -lfoo:

$ gcc ... -lfoo ...

When creating the library, the full file name libfoo.so or libfoo.a must be specified.

Additional resources

Section 2.3.2, “The soname mechanism”

2.3.2. The soname mechanism

Dynamically loaded libraries (shared objects) use a mechanism called soname to manage multiple

compatible versions of a library.


Prerequisites

You must understand dynamic linking and libraries.

You must understand the concept of ABI compatibility.

You must understand library naming conventions.

You must understand symbolic links.

Problem introduction

A dynamically loaded library (shared object) exists as an independent executable file. This makes it

possible to update the library without updating the applications that depend on it. However, the

following problems arise with this concept:

Identification of the actual version of the library

Need for multiple versions of the same library present

Signalling ABI compatibility of each of the multiple versions

The soname mechanism

To resolve this, Linux uses a mechanism called soname.

A foo library version X.Y is ABI-compatible with other versions with the same value of X in a version

number. Minor changes preserving compatibility increase the number Y. Major changes that break

compatibility increase the number X.

The actual foo library version X.Y exists as a file libfoo.so.x.y. Inside the library file, a soname is recorded

with value libfoo.so.x to signal the compatibility.

When applications are built, the linker looks for the library by searching for the file libfoo.so. A symbolic

link with this name must exist, pointing to the actual library file. The linker then reads the soname from

the library file and records it into the application executable file. Finally, the linker creates the application

that declares dependency on the library using the soname, not a name or a file name.

When the runtime dynamic linker links an application before running, it reads the soname from

application’s executable file. This soname is libfoo.so.x. A symbolic link with this name must exist,

pointing to the actual library file. This allows loading the library, regardless of the Y component of a

version, because the soname does not change.

NOTE

The Y component of the version number is not limited to just a single number.

Additionally, some libraries encode their version in their name.

Reading soname from a file

To display the soname of a library file somelibrary:

$ objdump -p somelibrary | grep SONAME

Replace somelibrary with the actual file name of the library you wish to examine.


2.3.3. Creating dynamic libraries with GCC

Dynamically linked libraries (shared objects) allow:

resource conservation through code reuse

increased security by making it easier to update the library code

Follow these steps to build and install a dynamic library from source.

Prerequisites

You must understand the soname mechanism.

GCC must be installed on the system.

You must have source code for a library.

Procedure

1. Change to the directory with library sources.

2. Compile each source file to an object file with the position-independent code option -fPIC:

$ gcc ... -c -fPIC some_file.c ...

The object files have the same file names as the original source code files, but their extension is

.o.

3. Link the shared library from the object files:

$ gcc -shared -o libfoo.so.x.y -Wl,-soname,libfoo.so.x some_file.o ...

In this command, X is the major version number and Y is the minor version number.

4. Copy the libfoo.so.x.y file to an appropriate location, where the system’s dynamic linker can

find it. On Red Hat Enterprise Linux, the directory for libraries is /usr/lib64:

# cp libfoo.so.x.y /usr/lib64

Note that you need root permissions to manipulate files in this directory.

5. In the directory where you copied the library, create the symlink structure for the soname mechanism:

# ln -s libfoo.so.x.y libfoo.so.x

# ln -s libfoo.so.x libfoo.so
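The following is a minimal sketch of what a source file for such a library might contain, assuming a hypothetical library foo at version 1.2. All function and macro names are invented for illustration. The compatibility check mirrors the soname rule that versions sharing the same major number X are ABI-compatible.

```c
/* some_file.c: hypothetical source for libfoo, version 1.2 (assumed) */
#define FOO_VERSION_MAJOR 1
#define FOO_VERSION_MINOR 2

/* Internal helper. The static keyword gives it internal linkage,
 * so it is not part of the interface the shared object exports. */
static int pack_version(int major, int minor)
{
    return major * 100 + minor;
}

/* Public function: returns the version packed as major * 100 + minor. */
int foo_version(void)
{
    return pack_version(FOO_VERSION_MAJOR, FOO_VERSION_MINOR);
}

/* Public function: nonzero when the caller requires the same major
 * version, which is the compatibility that the soname signals. */
int foo_is_compatible(int required_major)
{
    return required_major == FOO_VERSION_MAJOR;
}
```

Following the procedure above with X=1 and Y=2, this file would be built into libfoo.so.1.2 with the soname libfoo.so.1.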

Additional resources

The Linux Documentation Project — Program Library HOWTO — 3. Shared Libraries

2.3.4. Creating static libraries with GCC and ar


Creating libraries for static linking is possible through conversion of object files into a special type of

archive file.

NOTE

Red Hat discourages the use of static linking for security reasons. Use static linking only

when necessary, especially against libraries provided by Red Hat. See Section 2.2.2,

“Static and dynamic linking” for more details.

Prerequisites

GCC and binutils must be installed on the system.

You must understand static and dynamic linking.

Source file(s) with functions to be shared as a library are available.

Procedure

1. Create intermediate object files with GCC.

$ gcc -c source_file.c ...

Append more source files if required. The resulting object files share the file name but use the

.o file name extension.

2. Turn the object files into a static library (archive) using the ar tool from the binutils package.

$ ar rcs libfoo.a source_file.o ...

File libfoo.a is created.

3. Use the nm command to inspect the resulting archive:

$ nm libfoo.a

4. Copy the static library file to the appropriate directory.

5. When linking against the library, GCC will automatically recognize from the .a file name

extension that the library is an archive for static linking.

$ gcc ... -lfoo ...

Additional resources

Linux manual page for ar(1):

$ man ar

2.4. MANAGING MORE CODE WITH MAKE

The GNU make utility, commonly abbreviated make, is a tool for controlling the generation of

executables from source files. make automatically determines which parts of a complex program have


changed and need to be recompiled. make uses configuration files called Makefiles to control the way

programs are built.

2.4.1. GNU make and Makefile overview

Creating a usable form (usually executable files) from the source files of a particular project requires several steps. Recording the actions and their sequence makes it possible to repeat them later.

Red Hat Enterprise Linux contains GNU make, a build system designed for this purpose.

Prerequisites

Understanding the concepts of compiling and linking

GNU make

GNU make reads Makefiles which contain the instructions describing the build process. A Makefile contains multiple rules that describe a way to satisfy a certain condition (target) with a specific action (recipe). Rules can hierarchically depend on other rules.

Running make without any options makes it look for a Makefile in the current directory and attempt to

reach the default target. The actual Makefile file name can be one of Makefile, makefile, and

GNUmakefile. The default target is determined from the Makefile contents.

Makefile details

Makefiles use a relatively simple syntax for defining variables and rules. Each rule consists of a target and a recipe. The target specifies the output produced when the rule is executed. The lines with recipes must start with the TAB character.

Typically, a Makefile contains rules for compiling source files, a rule for linking the resulting object files,

and a target that serves as the entry point at the top of the hierarchy.

Consider the following Makefile for building a C program which consists of a single file, hello.c.

all: hello

hello: hello.o
	gcc hello.o -o hello

hello.o: hello.c
	gcc -c hello.c -o hello.o

This example shows that to reach the target all, file hello is required. To get hello, one needs hello.o (linked by gcc), which in turn is created from hello.c (compiled by gcc).

The target all is the default target because it is the first target that does not start with a period (.). Running make without any arguments is then identical to running make all, when the current directory contains this Makefile.

Typical makefile

A more typical Makefile uses variables for generalization of the steps and adds a target clean, which removes everything but the source files.

CC=gcc
CFLAGS=-c -Wall
SOURCE=hello.c
OBJ=$(SOURCE:.c=.o)
EXE=hello

all: $(SOURCE) $(EXE)

$(EXE): $(OBJ)
	$(CC) $(OBJ) -o $@

%.o: %.c
	$(CC) $(CFLAGS) $< -o $@

clean:
	rm -rf $(OBJ) $(EXE)

Adding more source files to such a Makefile requires only adding them to the line where the SOURCE variable is defined.

Additional resources

GNU make: Introduction — 2 An Introduction to Makefiles

Section 2.1, “Building Code with GCC”

2.4.2. Example: Building a C program using a Makefile

Build a sample C program using a Makefile by following the steps in this example.

Prerequisites

You must understand the concepts of Makefiles and make.

Procedure

1. Create a directory hellomake and change to this directory:

$ mkdir hellomake

$ cd hellomake

2. Create a file hello.c with the following contents:

#include <stdio.h>

int main(int argc, char *argv[]) {
    printf("Hello, World!\n");
    return 0;
}

3. Create a file Makefile with the following contents:

CC=gcc
CFLAGS=-c -Wall
SOURCE=hello.c
OBJ=$(SOURCE:.c=.o)
EXE=hello

all: $(SOURCE) $(EXE)

$(EXE): $(OBJ)
	$(CC) $(OBJ) -o $@

%.o: %.c
	$(CC) $(CFLAGS) $< -o $@

clean:
	rm -rf $(OBJ) $(EXE)

IMPORTANT

The Makefile recipe lines must start with the tab character! When copying the

text above from the documentation, the cut-and-paste process may paste

spaces instead of tabs. If this happens, correct the issue manually.

4. Run make:

$ make

gcc -c -Wall hello.c -o hello.o

gcc hello.o -o hello

This creates an executable file hello.

5. Run the executable file hello:

$ ./hello

Hello, World!

6. Run the Makefile target clean to remove the created files:

$ make clean

rm -rf hello.o hello

Additional resources

Section 2.1.7, “Example: Building a C program with GCC (compiling and linking in one step)”

Section 2.1.9, “Example: Building a C++ program with GCC (compiling and linking in one step)”

2.4.3. Documentation resources for make

For more information about make, see the resources listed below.

Installed documentation

Use the man and info tools to view manual pages and information pages installed on your

system:


$ man make

$ info make

Online documentation

The GNU Make Manual hosted by the Free Software Foundation

2.5. CHANGES IN TOOLCHAIN SINCE RHEL 7

The following sections list changes in toolchain since the release of the described components in Red Hat Enterprise Linux 7. See also Release notes for Red Hat Enterprise Linux 8.0.

2.5.1. Changes in GCC in RHEL 8

In Red Hat Enterprise Linux 8, the GCC toolchain is based on the GCC 8.2 release series. Notable

changes since Red Hat Enterprise Linux 7 include:

Numerous general optimizations have been added, such as alias analysis, vectorizer

improvements, identical code folding, inter-procedural analysis, store merging optimization

pass, and others.

The Address Sanitizer has been improved.

The Leak Sanitizer for detection of memory leaks has been added.

The Undefined Behavior Sanitizer for detection of undefined behavior has been added.

Debug information can now be produced in the DWARF5 format. This capability is experimental.

The source code coverage analysis tool GCOV has been extended with various improvements.

Support for the OpenMP 4.5 specification has been added. Additionally, the offloading features

of the OpenMP 4.0 specification are now supported by the C, C++, and Fortran compilers.

New warnings and improved diagnostics have been added for static detection of certain likely

programming errors.

Source locations are now tracked as ranges rather than points, which allows much richer

diagnostics. The compiler now offers “fix-it” hints, suggesting possible code modifications. A

spell checker has been added to offer alternative names and ease detecting typos.

Security

GCC has been extended to provide tools to ensure additional hardening of the generated code.

For more details, see Section 2.5.2, “Security enhancements in GCC in RHEL 8”.

Architecture and processor support

Improvements to architecture and processor support include:

Multiple new architecture-specific options for the Intel AVX-512 architecture, a number of its

microarchitectures, and Intel Software Guard Extensions (SGX) have been added.

Code generation can now target the 64-bit ARM architecture LSE extensions, ARMv8.2-A 16-bit Floating-Point Extensions (FPE), and ARMv8.2-A, ARMv8.3-A, and ARMv8.4-A architecture versions.

Handling of the -march=native option on the ARM and 64-bit ARM architectures has been

fixed.

Support for the z13 and z14 processors of the 64-bit IBM Z architecture has been added.

Languages and standards

Notable changes related to languages and standards include:

The default standard used when compiling code in the C language has changed to C17 with

GNU extensions.

The default standard used when compiling code in the C++ language has changed to C++14 with

GNU extensions.

The C++ runtime library now supports the C++11 and C++14 standards.

The C++ compiler now implements the C++14 standard with many new features such as variable

templates, aggregates with non-static data member initializers, the extended constexpr

specifier, sized deallocation functions, generic lambdas, variable-length arrays, digit separators,

and others.

Support for the C language standard C11 has been improved: ISO C11 atomics, generic

selections, and thread-local storage are now available.

The new __auto_type GNU C extension provides a subset of the functionality of C++11 auto

keyword in the C language.

The _FloatN and _FloatNx type names specified by the ISO/IEC TS 18661-3:2015 standard are

now recognized by the C front end.

The default standard used when compiling code in the C language has changed to C17 with

GNU extensions. This has the same effect as using the --std=gnu17 option. Previously, the

default was C89 with GNU extensions.

GCC can now experimentally compile code using the C++17 language standard and certain

features from the C++20 standard.

Passing an empty class as an argument now takes up no space on the Intel 64 and AMD64

architectures, as required by the platform ABI. Passing or returning a class with only deleted

copy and move constructors now uses the same calling convention as a class with a non-trivial

copy or move constructor.

The value returned by the C++11 alignof operator has been corrected to match the C _Alignof

operator and return minimum alignment. To find the preferred alignment, use the GNU

extension __alignof__.

The main version of the libgfortran library for Fortran language code has been changed to 5.

Support for the Ada (GNAT), GCC Go, and Objective C/C++ languages has been removed. Use

the Go Toolset for Go code development.
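Two of the C language items listed above can be sketched briefly: C11 generic selections and the __auto_type GNU C extension. The macro TYPE_NAME and the function sum_ints are hypothetical names chosen for this illustration.

```c
#include <stddef.h>

/* C11 generic selection: choose a string at compile time based on
 * the type of the expression x. */
#define TYPE_NAME(x) _Generic((x), \
    int: "int",                    \
    double: "double",              \
    default: "other")

/* GNU C __auto_type: the type of the variable is inferred from its
 * initializer, similar to a subset of C++11 auto. */
int sum_ints(const int *values, size_t count)
{
    __auto_type total = 0; /* inferred as int */

    for (size_t i = 0; i < count; i++)
        total += values[i];
    return total;
}
```

Because __auto_type is a GNU extension, code that uses it is not portable to compilers that lack GNU C support.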

Additional resources


See also the Red Hat Enterprise Linux 8 Release Notes.

Using Go Toolset

2.5.2. Security enhancements in GCC in RHEL 8

The following are changes in GCC that relate to security and were added since the release of Red Hat Enterprise Linux 7.0.

New warnings

These warning options have been added:

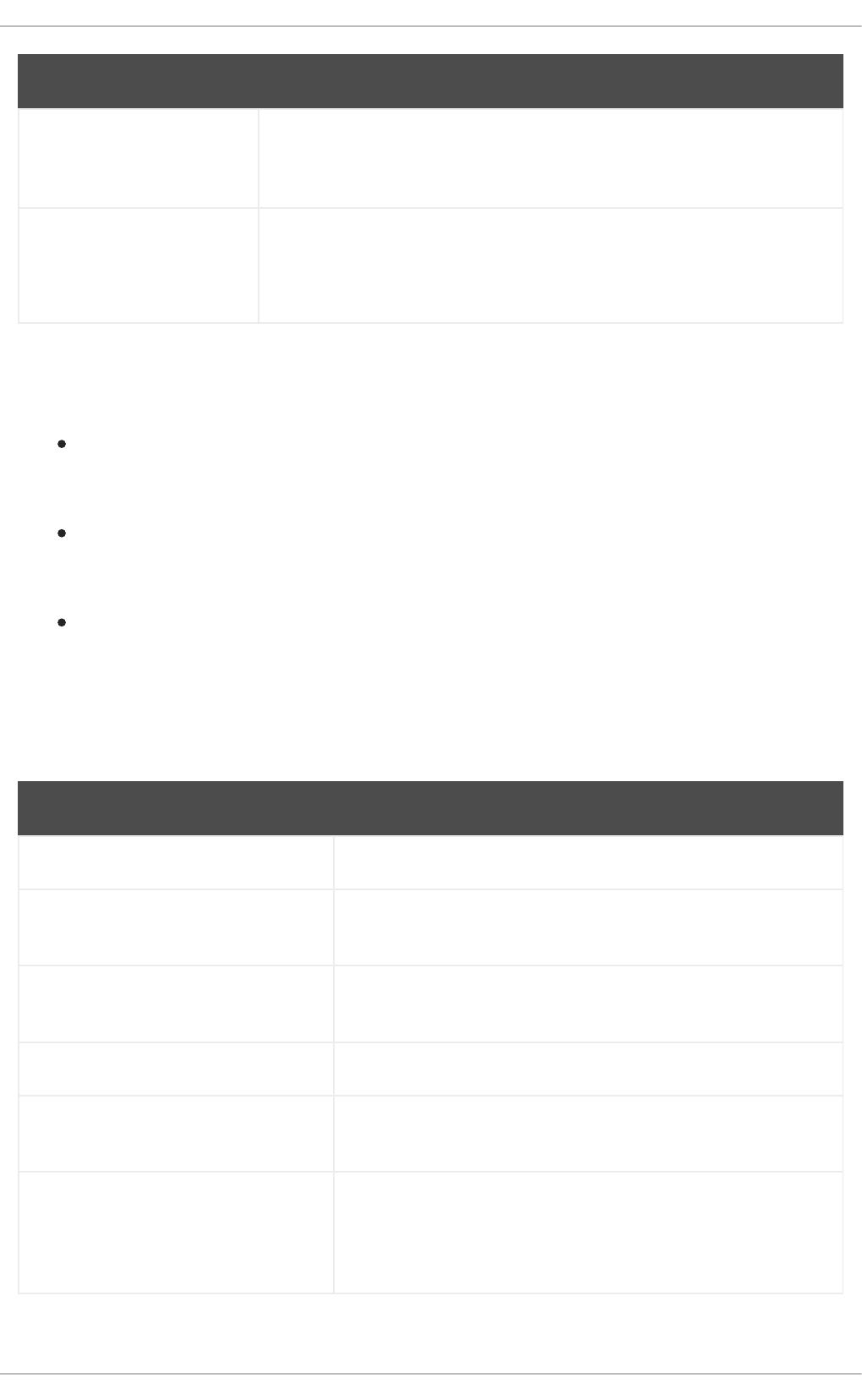

Option Displays warnings for

-Wstringop-truncation Calls to bounded string manipulation functions such as strncat, strncpy,

and stpncpy that might either truncate the copied string or leave the

destination unchanged.

-Wclass-memaccess Objects of non-trivial class types manipulated in potentially unsafe ways by

raw memory functions such as memcpy or realloc.

The warning helps detect calls that bypass user-defined constructors or

copy-assignment operators, corrupt virtual table pointers, data members of

const-qualified types or references, or member pointers. The warning also

detects calls that would bypass access controls to data members.

-Wmisleading-

indentation

Places where the indentation of the code gives a misleading idea of the block

structure of the code to a human reader.

-Walloc-size-larger-

than=size

Calls to memory allocation functions where the amount of memory to

allocate exceeds size. Works also with functions where the allocation is

specified by multiplying two parameters and with any functions decorated

with attribute alloc_size.

-Walloc-zero Calls to memory allocation functions that attempt to allocate zero amount of

memory. Works also with functions where the allocation is specified by

multiplying two parameters and with any functions decorated with attribute

alloc_size.

-Walloca All calls to the alloca function.

-Walloca-larger-

than=size

Calls to the alloca function where the requested memory is more than size.

-Wvla-larger-than=size Definitions of Variable Length Arrays (VLA) that can either exceed the

specified size or whose bound is not known to be sufficiently constrained.

-Wformat-overflow=level Both certain and likely buffer overflow in calls to the sprintf family of

formatted output functions. For more details and explanation of the level

value, see the gcc(1) manual page.

CHAPTER 2. CREATING C OR C++ APPLICATIONS

33

-Wformat-

truncation=level

Both certain and likely output truncation in calls to the snprintf family of

formatted output functions. For more details and explanation of the level

value, see the gcc(1) manual page.

-Wstringop-

overflow=type

Buffer overflow in calls to string handling functions such as memcpy and

strcpy. For more details and explanation of the level value, see the gcc(1)

manual page.

Option Displays warnings for

Warning improvements

These GCC warnings have been improved:

The -Warray-bounds option has been improved to detect more instances of out-of-bounds

array indices and pointer offsets. For example, negative or excessive indices into flexible array

members and string literals are detected.

The -Wrestrict option introduced in GCC 7 has been enhanced to detect many more instances

of overlapping accesses to objects via restrict-qualified arguments to standard memory and

string manipulation functions such as memcpy and strcpy.

The -Wnonnull option has been enhanced to detect a broader set of cases of passing null

pointers to functions that expect a non-null argument (decorated with attribute nonnull).
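The kind of overlap that -Wrestrict targets can be illustrated with an in-place string edit. The sketch below assumes a hypothetical helper, drop_prefix, and uses memmove because the source and destination ranges overlap. Using memcpy or strcpy for the same operation is undefined behavior and is the sort of call the improved warning aims to flag.

```cpp
#include <cstddef>
#include <cstring>

// Hypothetical helper: removes the first `n` characters of a
// NUL-terminated string in place.
void drop_prefix(char *text, std::size_t n)
{
    std::size_t len = std::strlen(text);
    if (n > len)
        n = len;
    // Source and destination overlap, so memmove is required here.
    // The "+ 1" also moves the terminating NUL.
    std::memmove(text, text + n, len - n + 1);
}
```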

New UndefinedBehaviorSanitizer

A new run-time sanitizer for detecting undefined behavior called UndefinedBehaviorSanitizer has been

added. The following options are noteworthy:

| Option | Check |
|---|---|
| -fsanitize=float-divide-by-zero | Detect floating-point division by zero. |
| -fsanitize=float-cast-overflow | Check that the result of a floating-point type to integer conversion does not overflow. |
| -fsanitize=bounds | Enable instrumentation of array bounds and detect out-of-bounds accesses. |
| -fsanitize=alignment | Enable alignment checking and detect various misaligned objects. |
| -fsanitize=object-size | Enable object size checking and detect various out-of-bounds accesses. |
| -fsanitize=vptr | Enable checking of C++ member function calls, member accesses, and some conversions between pointers to base and derived classes. Additionally, detect when referenced objects do not have the correct dynamic type. |
| -fsanitize=bounds-strict | Enable strict checking of array bounds. This enables -fsanitize=bounds and instrumentation of flexible array member-like arrays. |
| -fsanitize=signed-integer-overflow | Diagnose arithmetic overflows even on arithmetic operations with generic vectors. |
| -fsanitize=builtin | Diagnose at run time invalid arguments to __builtin_clz or __builtin_ctz prefixed builtins. Included in the checks enabled by -fsanitize=undefined. |
| -fsanitize=pointer-overflow | Perform cheap run-time tests for pointer wrapping. Included in the checks enabled by -fsanitize=undefined. |
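As one illustration of what these checks look for, __builtin_clz has an undefined result when its argument is zero. The following sketch, with the hypothetical wrapper leading_zero_bits, guards that case explicitly. Without the guard, a zero argument is the kind of call that -fsanitize=builtin is described as diagnosing at run time.

```cpp
#include <climits>

// Hypothetical wrapper: number of leading zero bits in `value`.
// __builtin_clz is a GCC builtin whose result is undefined for 0,
// so that case is handled separately.
unsigned leading_zero_bits(unsigned value)
{
    if (value == 0)
        return static_cast<unsigned>(sizeof(unsigned) * CHAR_BIT);
    return static_cast<unsigned>(__builtin_clz(value));
}
```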

New options for AddressSanitizer

These options have been added to AddressSanitizer:

| Option | Check |
|---|---|
| -fsanitize=pointer-compare | Warn about comparison of pointers that point to a different memory object. |
| -fsanitize=pointer-subtract | Warn about subtraction of pointers that point to a different memory object. |
| -fsanitize-address-use-after-scope | Sanitize variables whose address is taken and used after a scope where the variable is defined. |
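To make the use-after-scope check more concrete, the sketch below shows a correct function and describes, in a comment, the variation that would be a bug. The function name pick_larger is hypothetical. The example assumes the sanitizer is used together with -fsanitize=address.

```cpp
// Hypothetical example: returns the larger of `x` and a fallback of 10.
int pick_larger(int x)
{
    int fallback = 10;             // lives until the function returns
    const int *chosen = &fallback;
    {
        // If `fallback` were declared inside this inner block instead,
        // `chosen` could still point at it after the closing brace, and
        // the dereference below would be a use after scope.
        if (x > fallback)
            chosen = &x;
    }
    return *chosen;                // valid: both candidates are still in scope
}
```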

Other sanitizers and instrumentation

The option -fstack-clash-protection has been added to insert probes when stack space is

allocated statically or dynamically to reliably detect stack overflows and thus mitigate the attack

vector that relies on jumping over a stack guard page provided by the operating system.

A new option -fcf-protection=[full|branch|return|none] has been added to perform code

instrumentation and increase program security by checking that target addresses of control-

flow transfer instructions (such as indirect function call, function return, indirect jump) are valid.

Additional resources

For more details and explanation of the values supplied to some of the options above, see the

gcc(1) manual page:

$ man gcc

2.5.3. Compatibility-breaking changes in GCC in RHEL 8

C++ ABI change in std::string and std::list

The Application Binary Interface (ABI) of the std::string and std::list classes from the libstdc++ library

changed between RHEL 7 (GCC 4.8) and RHEL 8 (GCC 8) to conform to the C++11 standard. The

libstdc++ library supports both the old and new ABI, but some other C++ system libraries do not. As a

consequence, applications that dynamically link against these libraries will need to be rebuilt. This affects

all C++ standard modes, including C++98. It also affects applications built with Red Hat Developer

Toolset compilers for RHEL 7, which kept the old ABI to maintain compatibility with the system libraries.
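When diagnosing link problems related to this change, it can help to know which std::string ABI a translation unit was compiled with. The sketch below relies on the libstdc++ macro _GLIBCXX_USE_CXX11_ABI, which is not mentioned in this section and is treated here as an assumption about the toolchain. The function name uses_cxx11_string_abi is hypothetical.

```cpp
#include <string>

// Reports whether this translation unit was compiled against the
// C++11-conforming std::string ABI. _GLIBCXX_USE_CXX11_ABI is specific
// to libstdc++; with other standard libraries this returns false.
constexpr bool uses_cxx11_string_abi()
{
#if defined(_GLIBCXX_USE_CXX11_ABI) && _GLIBCXX_USE_CXX11_ABI
    return true;
#else
    return false;
#endif
}
```

Printing this value from both an application and a small test built the same way as a library is one possible way to spot a mismatch before rebuilding.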

GCC no longer builds Ada, Go, and Objective C/C++ code

Capability for building code in the Ada (GNAT), GCC Go, and Objective C/C++ languages has been

removed from the GCC compiler.

To build Go code, use the Go Toolset instead.

CHAPTER 3. DEBUGGING APPLICATIONS

Debugging applications is a broad topic. This chapter describes the most common debugging techniques for a range of situations.

3.1. ENABLING DEBUGGING WITH DEBUGGING INFORMATION

To debug applications and libraries, debugging information is required. The following sections describe

how to obtain this information.

3.1.1. Debugging information

While debugging any executable code, two types of information allow the tools, and by extension the

programmer, to comprehend the binary code:

the source code text

a description of how the source code text relates to the binary code

Such information is called debugging information.

Red Hat Enterprise Linux uses the ELF format for executable binaries, shared libraries, and debuginfo files. Within these ELF files, the DWARF format is used to hold the debug information.

To display DWARF information stored within an ELF file, run the readelf -w file command.

IMPORTANT

STABS is an older, less capable format, occasionally used with UNIX. Its use is

discouraged by Red Hat. GCC and GDB provide STABS production and consumption on

a best effort basis only. Some other tools such as Valgrind and elfutils do not work with

STABS.

Additional resources

The DWARF Debugging Standard

3.1.2. Enabling debugging of C and C++ applications with GCC

Because debugging information is large, it is not included in executable files by default. To enable

debugging of your C and C++ applications with it, you must explicitly instruct the compiler to create it.

To enable creation of debugging information with GCC when compiling and linking code, use the -g

option:

$ gcc ... -g ...
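As a small translation unit to experiment with, the sketch below contains a local variable and a macro. The names scaled_sum and SCALE_FACTOR are hypothetical and chosen only for illustration. Compiling it with -g, for example with g++ -g -c on a file containing this code, should let a debugger such as GDB relate the machine code to these source lines and inspect the total variable.

```cpp
#include <cstddef>

// Hypothetical macro. Macro definitions are recorded in the debug
// information only when building with -g3 rather than -g.
#define SCALE_FACTOR 3

// Hypothetical function: sums `count` values and scales the result.
long scaled_sum(const int *values, std::size_t count)
{
    long total = 0;                // a local worth inspecting in a debugger
    for (std::size_t i = 0; i < count; ++i)
        total += values[i];
    return total * SCALE_FACTOR;
}
```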

Optimizations performed by the compiler and linker can result in executable code that is hard to relate to the original source code: variables may be optimized out, loops unrolled, operations merged into the surrounding ones, and so on. This affects debugging negatively. For an improved debugging experience, consider setting the optimization level with the -Og option. However, changing the optimization level changes the executable code and may change the actual behavior, which can include hiding some bugs.

To also include macro definitions in the debug information, use the -g3 option instead of -g.

The -fcompare-debug GCC option tests code compiled by GCC with debug information and

without debug information. The test passes if the resulting two binary files are identical. This

test ensures that executable code is not affected by any debugging options, which further

ensures that there are no hidden bugs in the debug code. Note that using the -fcompare-debug